Communication in Data Science. NumPy. Accountability in AI. Happy Thanksgiving! [DSR #113]

Happy Thanksgiving to all of the US-based Data Science Roundup readers! I hope you’ve had as relaxing a long weekend as I have. Accordingly, I’m keeping this week’s Roundup on the shorter side.

If you read one thing this week, make it the post on communication.

Enjoy :)

- Tristan

❤️ Want to support us? Forward this email to three friends!

🚀 Forwarded this from a friend? Sign up to the Data Science Roundup here.

This is the single best article I’ve read on the communication of complex ideas. It introduces a concept (new to me) called the Curse of Knowledge, whereby it’s very difficult for someone who knows something to imagine what it is like to not know that thing. The implication:

It’s not enough to simply understand a subject and be able to produce true sentences about it. A skilled communicator must be able to craft each part of their message in anticipation of the audience’s state of mind.

The article goes much deeper into strategies for explaining something you understand but your audience does not. The author has even designed a course on the subject and links to two blog posts of his students that serve as exemplars.

Highly recommended.

Why you should forget ‘for-loop’ for data science code and embrace vectorization

Data science needs fast computation and transformation of data. NumPy objects in Python provide that advantage over regular programming constructs like for-loops.

Element-by-element loops in Python are expensive because every iteration goes through the interpreter, and NumPy's vectorized operations are a much faster alternative. If this is a new topic for you, this article is a must-read.

towardsdatascience.com
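
To make the idea concrete, here is a minimal sketch of the same calculation written both ways. It is my own illustration, not code from the article, and the function names are made up for the example. Both versions return the same number; on large arrays the vectorized one is typically far faster, though the exact speedup depends on your machine and data.

```python
import numpy as np


def sum_of_squares_loop(values):
    """Sum of squares using an explicit Python for-loop."""
    total = 0.0
    for v in values:
        total += v * v
    return total


def sum_of_squares_vectorized(values):
    """Sum of squares using a single NumPy operation instead of a loop."""
    arr = np.asarray(values, dtype=np.float64)
    # The dot product of an array with itself is the sum of its squared elements.
    return float(np.dot(arr, arr))


# Hypothetical sample data for the comparison.
data = np.arange(1_000, dtype=np.float64)
loop_result = sum_of_squares_loop(data)
vec_result = sum_of_squares_vectorized(data)
```

If you want to see the difference yourself, time both functions with the standard library's `timeit` module on a larger array.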

Accountability of AI Under the Law: The Role of Explanation

How can we take advantage of what AI systems have to offer, while also holding them accountable? In this work, we focus on one tool: explanation.

This paper proposes that citizens have a legal right to an explanation of an AI decision in much the same way that they currently have a legal right to trial by jury.

Improving TripAdvisor Photo Selection With Deep Learning

A useful end-to-end story, from problem identification through solution design and build to validation.

I’m increasingly seeing engineering teams incorporate pre-trained networks to cut development time on deep learning features. These pre-trained networks could be the biggest accelerator of the next phase of deep learning deployment.

engineering.tripadvisor.com

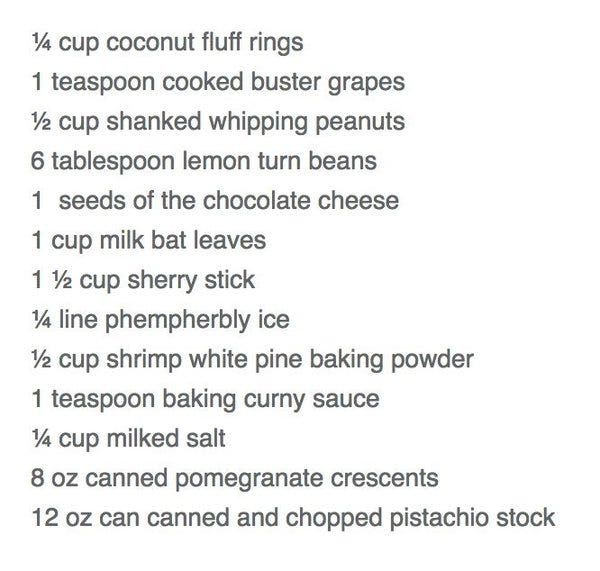

Algorithmic Cookery & Happy Thanksgiving

The recipe above was generated by Janelle Shane’s recipe-generating neural network, which, of course, is a thing. I found this entire article very amusing. Here’s one tip:

For the literary-minded, Janelle recommends training the neural network first on the works of your favorite author before feeding it recipes. Here is what happens when you use the works of H. P. Lovecraft: “Bake at 350 degrees for 30 to 32 minutes. Test corners to see if done, as center will seem like the next horror of Second House. Whip ½ pint of heavy cream. Add 4 Tbsp. brandy or rum to possibly open things that will never be wholly reported.”

Also, somehow I missed that Fast Forward Labs (Hilary Mason’s data science consultancy, and the authors of this post) had been acquired by Cloudera back in September. I’m a bit surprised; it seemed like they had a good thing going on their own.

blog.fastforwardlabs.com

Thanks to our sponsors!

Fishtown Analytics: Analytics Consulting for Startups

At Fishtown Analytics, we work with venture-funded startups to implement Redshift, Snowflake, Mode Analytics, and Looker. Want advanced analytics without needing to hire an entire data team? Let’s chat.

Stitch: Simple, Powerful ETL Built for Developers

Developers shouldn’t have to write ETL scripts. Consolidate your data in minutes. No API maintenance, scripting, cron jobs, or JSON wrangling required.

The internet's most useful data science articles. Curated with ❤️ by Tristan Handy.

915 Spring Garden St., Suite 500, Philadelphia, PA 19123