Data Science Roundup #80: Momentum, the Sentiment Neuron, and ML for Product Managers!

Interesting new research this week from OpenAI, Google, and UC Davis. Plus plenty of other good stuff. Enjoy 😀

- Tristan

Two Posts You Can't Miss

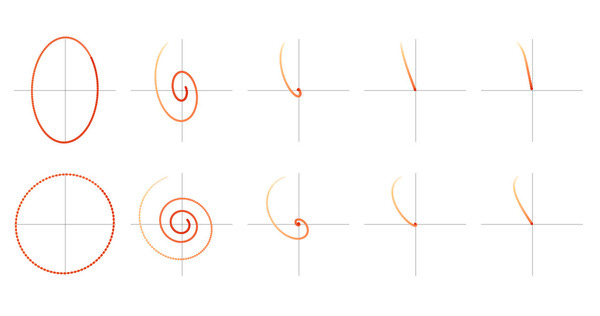

Momentum proposes the following tweak to gradient descent. We give gradient descent a short-term memory.
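
To make that "short-term memory" concrete, here is a minimal Python sketch of gradient descent with momentum. Rather than stepping along the raw gradient, it keeps a decayed running sum of past gradients (`z`) and steps along that. The function name, the step size `alpha`, the decay `beta`, and the toy quadratic are illustrative assumptions, not code from the article.

```python
def gradient_descent_momentum(grad, w0, alpha=0.05, beta=0.8, steps=300):
    """Minimize a function from its gradient, using momentum.

    grad  -- function returning the gradient (a list) at point w
    alpha -- step size (assumed value, tune per problem)
    beta  -- how much of the previous update direction is remembered
    """
    w = list(w0)
    z = [0.0] * len(w)  # the "short-term memory" of past gradients
    for _ in range(steps):
        g = grad(w)
        # Blend the new gradient into the decayed memory.
        z = [beta * zi + gi for zi, gi in zip(z, g)]
        # Step along the remembered direction, not the raw gradient.
        w = [wi - alpha * zi for wi, zi in zip(w, z)]
    return w


# Hypothetical test problem: f(w) = 0.5 * (w[0]**2 + 10 * w[1]**2),
# a poorly conditioned bowl with its minimum at the origin.
def quadratic_grad(w):
    return [w[0], 10.0 * w[1]]


w_final = gradient_descent_momentum(quadratic_grad, [1.0, 1.0])
print(w_final)  # both coordinates end up very close to 0
```

Setting `beta=0` drops the memory and recovers plain gradient descent, which makes it easy to compare the two on the same problem.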

This is interesting, but it probably won’t change your world tomorrow. What is novel about this paper is its format. It is one of the earliest pieces of research published on Distill, which I covered a couple of weeks ago, and its presentation and tone are unusual for academic research: the writing is accessible, the interactive elements are impressive, and the design is attractive. Contrast that with the traditional academic PDF.

Data science is one of those rare fields where commercial practitioners operate right at the edge of what has been proven in the lab. Anything that helps new research cross that membrane more easily will have very positive implications for the field.

We’ve developed an unsupervised system which learns an excellent representation of sentiment, despite being trained only to predict the next character in the text of Amazon reviews.

A linear model using this representation achieves state-of-the-art sentiment analysis accuracy on a small but extensively-studied dataset, the Stanford Sentiment Treebank (we get 91.8% accuracy versus the previous best of 90.2%), and can match the performance of previous supervised systems using 30-100x fewer labeled examples. Our representation also contains a distinct “sentiment neuron” which contains almost all of the sentiment signal.

Includes code and an excellent animated graphic.

This Week's Top Posts

Machine Learning for Product Managers

Creating products that use ML is an increasingly multi-disciplinary activity.

This is an amazing, non-technical post focusing on how to think about incorporating machine learning into products. Very worthwhile if you’re involved in product design.

Google Research: Federated Machine Learning

Federated Learning enables mobile phones to collaboratively learn a shared prediction model while keeping all the training data on device, decoupling the ability to do machine learning from the need to store the data in the cloud. This goes beyond the use of local models that make predictions on mobile devices by bringing model training to the device as well.
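
A rough way to picture this is a toy federated-averaging loop in Python. Each simulated "phone" improves the shared model on its own private data, and the server only ever sees the resulting weights, which it averages. This is a sketch under strong simplifying assumptions (a one-parameter linear model, made-up data, no compression, encryption, or device sampling), not Google's production system; every name below is hypothetical.

```python
def local_update(w, data, lr=0.01, epochs=5):
    """Run a few gradient steps for y ~ w * x on one device's private data."""
    for _ in range(epochs):
        # Gradient of mean squared error with respect to w.
        grad = sum(2 * (w * x - y) * x for x, y in data) / len(data)
        w -= lr * grad
    return w


def federated_round(w_global, client_datasets):
    """One round: clients train locally, the server averages their weights."""
    total = sum(len(d) for d in client_datasets)
    # Only (weight, example count) pairs leave each device, never raw data.
    updates = [(local_update(w_global, d), len(d)) for d in client_datasets]
    # Weight each client's model by how many examples it trained on.
    return sum(w * n for w, n in updates) / total


# Made-up private datasets, all drawn from y = 3 * x.
clients = [
    [(1.0, 3.0), (2.0, 6.0)],
    [(3.0, 9.0)],
    [(0.5, 1.5), (1.5, 4.5), (2.5, 7.5)],
]

w = 0.0
for _ in range(50):
    w = federated_round(w, clients)
print(w)  # approaches 3.0, the slope shared by all clients
```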

First steps towards a hive mind? 👽

research.googleblog.com

Medical Image Analysis with Deep Learning

Much has been made of the ability of deep learning systems to diagnose disease from medical images. Ever wanted to check out the internals? This post is an awesome walkthrough.

Using R to study the Yemen Conflict with Night Light Images

This is just freaking cool. Take open data from NASA, add R and a talented data scientist and what do you get? A surprisingly sophisticated understanding of an ongoing military conflict.

In Defense of Interactive Graphics

Why does the NYT invest so heavily in interactive graphics? Their graphics editor:

Interactive graphics are not just a fun addition but can actually increase the transparency of our work, open us for criticism, and thereby, hopefully, help re-build some trust in journalism.

👍

Tapjoy Engineering: Real-time Deduping At Scale

At Tapjoy, analytics is core to our platform. On an average day, we’re processing over 2 million messages per minute through our analytics pipeline. These messages are generated by various user events in our platform and eventually aggregated for a close-to-realtime view of the system. (…) In this post we look at how we handled the at-least-once semantics of our Kafka pipeline through real-time deduping in order to ensure the integrity / accuracy of the data.

You may or may not need to solve a problem with this level of technical complexity today, but it’s important to understand just how hard it is to solve this type of problem well.
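
To get a feel for the core idea, here is a minimal, single-process Python sketch of deduping an at-least-once stream: remember each message ID for a fixed window and drop repeats seen inside it. This is not Tapjoy's implementation. The `Deduper` class, the `id` field, and the one-hour window are assumptions made for illustration, and a real pipeline at that volume would need a shared, fault-tolerant store instead of an in-memory dict.

```python
import time


class Deduper:
    """Remembers message IDs for ttl_seconds and flags repeats (illustrative)."""

    def __init__(self, ttl_seconds=3600.0, clock=time.monotonic):
        self.ttl = ttl_seconds
        self.clock = clock  # injectable so the window can be tested
        self.seen = {}      # message_id -> time at which we forget it

    def is_new(self, message_id):
        now = self.clock()
        # Forget IDs whose window has passed so memory stays bounded.
        # (A linear scan per call is fine for a sketch, not for production.)
        for key in [k for k, expiry in self.seen.items() if expiry <= now]:
            del self.seen[key]
        if message_id in self.seen:
            return False  # redelivery inside the window: drop it
        self.seen[message_id] = now + self.ttl
        return True


def dedupe(messages, deduper):
    """Keep only the first delivery of each message ID."""
    return [m for m in messages if deduper.is_new(m["id"])]


# An at-least-once stream may deliver message "a" twice.
stream = [
    {"id": "a", "event": "install"},
    {"id": "b", "event": "click"},
    {"id": "a", "event": "install"},
]
unique = dedupe(stream, Deduper())
print([m["id"] for m in unique])  # ['a', 'b']
```

The hard part the post alludes to is everything this sketch leaves out: doing the same check across many consumers, surviving restarts, and choosing a window long enough to catch late redeliveries without holding every ID forever.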

Analyzing Customer Feedback Using Machine Learning

At Fishtown Analytics, we’ve done plenty of work with quantitative NPS data: the 1-10 rating is a strong churn predictor and a worthwhile addition to your data warehouse. This post describes why and how to dig into your qualitative NPS data: the free-text feedback you get along with the 1-10 responses. That free-text field is a goldmine once you apply some fairly straightforward machine learning, much of it available as cloud services.

DeepMind Solves AGI, Summons Demon

This morning, a group of research scientists at Google DeepMind announced that they had inadvertently solved the riddle of artificial general intelligence (AGI). Their approach relies upon a beguilingly simple technique called symmetrically toroidal asynchronous bisecting convolutions. By the year’s end, Alphabet executives expect that these neural networks will exhibit fully autonomous self-improvement.

Cute :)

approximatelycorrect.com

Data viz of the week

Title: "Ride-hailing apps may help to curb drunk driving"

Thanks to our sponsors!

Fishtown Analytics: Analytics Consulting for Startups

Fishtown Analytics works with venture-funded startups to implement Redshift, Snowflake, Mode Analytics, and Looker. Want advanced analytics without needing to hire an entire data team? Let’s chat.

Stitch: Simple, Powerful ETL Built for Developers

Developers shouldn’t have to write ETL scripts. Consolidate your data in minutes. No API maintenance, scripting, cron jobs, or JSON wrangling required.

The internet's most useful data science articles. Curated with ❤️ by Tristan Handy.
