Experimentation at DoorDash. Data Tooling. Kubernetes. Deep learning on Spark? [DSR #131]

The Week's Most Useful Posts

Switchback Tests and Randomized Experimentation Under Network Effects at DoorDash

Excellent writeup of a sophisticated experimentation methodology in a large network.

Given the systemic nature of many of our products, simple A/B tests are often ineffective due to network effects. To be able to experiment in the face of network effects, we use a technique known as switchback testing, where we switch back and forth between treatment and control in particular regions over time.

Fun fact: switchback testing was originally employed in an agricultural context, specifically for cow lactation experiments.

This reminds me of a post that Lyft wrote a while ago on their experimentation methodology. It turns out that naive A/B testing is insufficient in more complicated experimental environments.
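To make the switchback idea concrete, here is a minimal sketch in Python. It is not DoorDash's implementation: the function names, the 50/50 random assignment, and the difference-in-means estimate are all assumptions for illustration. The point it shows is that the unit of randomization is a (region, time window) pair rather than an individual user.

```python
import random
from collections import defaultdict
from statistics import mean


def assign_switchback(regions, num_windows, seed=0):
    """Randomly assign each (region, time window) unit to an arm.

    Hypothetical helper: a real system would also choose the window
    length and may balance arms within each region.
    """
    rng = random.Random(seed)
    return {
        (region, window): rng.choice(["control", "treatment"])
        for region in regions
        for window in range(num_windows)
    }


def estimate_effect(assignment, outcomes):
    """Difference in mean outcome between treatment and control units.

    `outcomes` maps (region, window) -> one aggregated metric for that
    unit (for example, average delivery time in that region and window).
    Both arms must contain at least one unit.
    """
    by_arm = defaultdict(list)
    for unit, arm in assignment.items():
        by_arm[arm].append(outcomes[unit])
    return mean(by_arm["treatment"]) - mean(by_arm["control"])
```

Because everyone in a region sees the same variant during a given window, treated and untreated users are not competing for the same supply at the same time, which is the interference that breaks a simple user-level A/B test.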

3 Industry Leaders on the Future of Data Tooling

We reached out to three leaders in the data engineering field who are among those leading the charge toward our modular future. We wanted to know: What technology or tools in the data science and/or data engineering space are you most excited about right now and why?

👍👍

blog.modeanalytics.com

The Scientific Paper Is Obsolete. Here's What's Next.

Saved you a click: it’s notebook computing. Notebook computing is incredibly common in data science today. Mathematica started it all, Jupyter made it massively popular, and today there are notebook products everywhere you look.

This article is fascinating for two reasons. First, this isn’t a post in a data science blog, or even a mainstream tech blog like Wired. No, this is The Atlantic. I’m used to mainstream press writing breathy articles about AI, but when they do an in-depth piece about UI paradigms in data science tools, that’s unusual.

Second, the piece itself is a wonderful zoom-out on current trends that we’re all waist-deep in. I don’t know if I 100% buy that the notebook is replacing the scientific paper, but I do think that the mechanisms used to publish scientific information have massive impacts on the growth of human knowledge.

Introducing TensorFlow Hub: A Library for Reusable Machine Learning Modules in TensorFlow

TensorFlow Hub is a platform to publish, discover, and reuse parts of machine learning modules in TensorFlow. By a module, we mean a self-contained piece of a TensorFlow graph, along with its weights, that can be reused across other, similar tasks. By reusing a module, a developer can train a model using a smaller dataset, improve generalization, or simply speed up training.

Modularization and publishing are how computer science advances. It’s good to see efforts in that direction for ML; most ML code today is not particularly reusable.

Deep Learning With Apache Spark

Towards Data Science published this piece early in the week and sent me down a rabbit hole learning about parallelized deep learning execution and orchestration. Is it actually a good idea to do deep learning on Spark? Yahoo seems to think so, but they also have a famously large investment in Hadoop and HDFS. Tools like RiseML give you purpose-built orchestration tools that seem more native to the deep learning world.

This is an open topic for me, and one I’m definitely interested in reading more on.

towardsdatascience.com

Deploy Elasticsearch with Kubernetes on AWS

I’ve talked about Docker a couple of times in the Roundup before. Containers are a very useful tool for data scientists, and you should really be able to get up and running with Docker for your personal use after a couple of hours with the docs. Not too bad.

Kubernetes (K8S) is an altogether different animal. If you’re not familiar, Kubernetes is a way to run clusters of Docker containers and allow them to do special things like fail over to each other and add and remove workers automatically.

Let me just say: Kubernetes is hard. Managing a K8S cluster is cutting-edge DevOps work, and I didn’t include this post because I think you should march out and try to set one up. I do, however, think that it’s important for data scientists to understand the technology: it’s beginning to invade data science products left and right.

If you’re not familiar with K8S, this post will give you a sense for what’s involved in going end-to-end: from cluster creation to app deployment.

Department of Data Viz

I came across a couple of really novel / interesting examples of data viz in my travels this past week. Neither is probably applicable to work you’re doing today, but shelve them away as inspiration for the future.

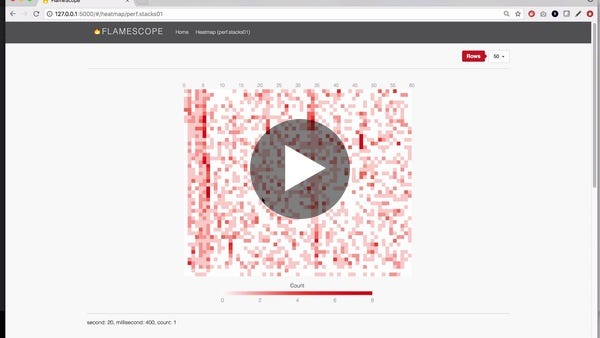

Netflix FlameScope - YouTube

Netflix just released a very cool interface for analyzing program performance data. I love the interaction paradigm, which you can see in the short video above: the interface allows the user to interact with two grains of data and toggle between them very naturally.

If you’re not familiar with a flame chart (I wasn’t!), check out this primer.
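For a rough sense of the data behind the two grains, here is a small Python sketch. It is an illustration under assumptions, not FlameScope's code: the sample format (a timestamp plus a list of frames) and both function names are made up for the example. The coarse grain counts samples per time bucket; the fine grain collapses the stacks inside a selected time range into the semicolon-joined "folded" form that flame graph tools commonly consume.

```python
from collections import Counter


def samples_per_bucket(samples, bucket_seconds):
    """Coarse grain: number of profiler samples in each time bucket."""
    counts = Counter()
    for timestamp, _stack in samples:
        counts[int(timestamp // bucket_seconds)] += 1
    return counts


def fold_stacks(samples, start, end):
    """Fine grain: count identical call stacks with start <= timestamp < end.

    Each stack is a list of frame names ordered from root to leaf.
    """
    folded = Counter()
    for timestamp, stack in samples:
        if start <= timestamp < end:
            folded[";".join(stack)] += 1
    return folded
```

In a flame chart, the width of each box is proportional to how many samples include that frame at that depth, so the counts from `fold_stacks` are what would end up drawn.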

Gaze and Foot Placement When Walking Over Rough Terrain

This is one of the coolest data visualizations I’ve come across recently. Click through and take a quick look at the gif—your brain and your eyes are doing all of this, all the time, as you traverse rough terrain (and you’re not even really aware of it!).

The author spent four years on this research. Beautiful example of bespoke data visualization.

Thanks to our sponsors!

Fishtown Analytics: Analytics Consulting for Startups

At Fishtown Analytics, we work with venture-funded startups to implement Redshift, Snowflake, Mode Analytics, and Looker. Want advanced analytics without needing to hire an entire data team? Let’s chat.

Stitch: Simple, Powerful ETL Built for Developers

Developers shouldn’t have to write ETL scripts. Consolidate your data in minutes. No API maintenance, scripting, cron jobs, or JSON wrangling required.

The internet's most useful data science articles. Curated with ❤️ by Tristan Handy.

915 Spring Garden St., Suite 500, Philadelphia, PA 19123