Pre-Training NLP Models. Amazon ML Certification. Graph Theory. Planning Data Science Sprints. [DSR #164]

This week's best data science articles

NLP's ImageNet moment has arrived

This is absolutely the must-read post of the week. The intro:

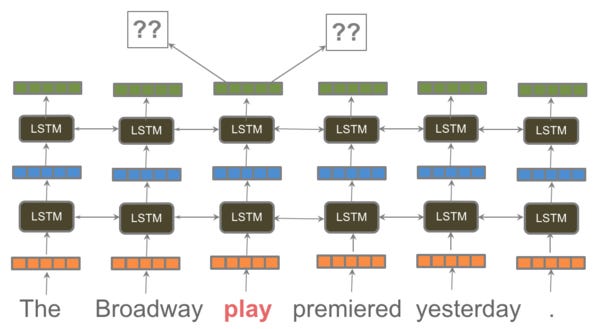

The long reign of word vectors as NLP’s core representation technique has seen an exciting new line of challengers emerge: ELMo, ULMFiT, and the OpenAI transformer. These works made headlines by demonstrating that pretrained language models can be used to achieve state-of-the-art results on a wide range of NLP tasks. Such methods herald a watershed moment: they may have the same wide-ranging impact on NLP as pretrained ImageNet models had on computer vision.

I don’t follow NLP super-closely, but apparently these breakthrough results have been piling up over the course of 2018. I also hadn’t been deeply familiar with just how influential ImageNet was:

Transfer learning via pretraining on ImageNet is in fact so effective in computer vision that not using it is now considered foolhardy.

If this transition is real, it’s significant: advances of this import come along rarely. From the conclusion:

In light of the impressive empirical results of ELMo, ULMFiT, and OpenAI it only seems to be a question of time until pretrained word embeddings will be dethroned and replaced by pretrained language models in the toolbox of every NLP practitioner.

AWS Training and Certification - Machine Learning

Amazon made waves this week by making its internal ML training courses public. From the release:

Dive deep into the same machine learning (ML) curriculum used to train Amazon’s developers and data scientists. We offer 30+ digital ML courses totaling 45+ hours, plus hands-on labs and documentation, originally developed for Amazon’s internal use. Developers, data scientists, data platform engineers, and business decision makers can use this training to learn how to apply ML, artificial intelligence (AI), and deep learning (DL) to their businesses unlocking new insights and value.

I haven’t checked it out yet. If you spend any time with the material, I’d love to hear your thoughts.

How many years do we lose to the air we breathe?

The average person on Earth would live 2.6 years longer if their air contained none of the deadliest type of pollution, according to researchers at the University of Chicago’s Energy Policy Institute. Your number depends on where you live.

It’s quite tragic to learn that people in several districts in Delhi lose 11 years of their lives to air pollution. This interactive data visualization from The Washington Post is another impressive piece of work; they’ve been doing a lot of great stuff recently.

www.washingtonpost.com

Uber: Montezuma's Revenge Solved by Go-Explore

Today we introduce Go-Explore, a new family of algorithms capable of achieving scores over 2,000,000 on Montezuma’s Revenge and scoring over 400,000 on average! Go-Explore reliably solves the entire game(…)

Big announcement from Uber. This follow-on post, written by a Google software engineer, does an excellent job of contextualizing the results.

The controversy around the release centers on the simulator's ability to initialize to any desired state and begin learning from there. This lets Go-Explore do a much better job of exploring the solution space. But is this initialization assumption realistic or useful?

Not sure how applicable this is to your day-to-day, but I found the combination of both posts very interesting.
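
If the state-restoration assumption feels abstract, here is a rough sketch of the "return to a saved state, then explore from it" loop on a toy problem. This is not Uber's implementation: `ChainEnv`, `explore`, the uniform cell selection, and every parameter value are hypothetical stand-ins chosen for illustration, and the real method works on game frames with a more careful selection rule.

```python
import random


class ChainEnv:
    """Hypothetical toy environment: walk along a line from 0 toward `goal`."""

    def __init__(self, goal=10):
        self.goal = goal
        self.pos = 0

    def get_state(self):
        return self.pos

    def set_state(self, state):
        # The contested assumption: the simulator can jump to any saved state.
        self.pos = state

    def step(self, action):
        # action is -1 or +1; the position is clamped to [0, goal].
        self.pos = max(0, min(self.goal, self.pos + action))
        return self.pos


def explore(env, iterations=5000, steps_per_iteration=5, seed=0):
    """Build an archive mapping each visited cell to an action list reaching it."""
    rng = random.Random(seed)
    archive = {env.get_state(): []}
    for _ in range(iterations):
        # 1. Pick a previously visited cell (uniformly here, for simplicity).
        cell = rng.choice(list(archive))
        # 2. Return to it by restoring the simulator state directly.
        env.set_state(cell)
        trajectory = list(archive[cell])
        # 3. Explore from there with random actions, recording what is found.
        for _ in range(steps_per_iteration):
            action = rng.choice((-1, 1))
            new_cell = env.step(action)
            trajectory.append(action)
            is_new = new_cell not in archive
            if is_new or len(trajectory) < len(archive[new_cell]):
                archive[new_cell] = list(trajectory)
    return archive


if __name__ == "__main__":
    found = explore(ChainEnv(goal=10))
    print(f"cells discovered: {len(found)}")
```

Step 2 is where the debate lives: without `set_state`, the agent would have to replay a stored action list (or learn a policy) just to get back to a promising cell before it could explore any further.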

On Agile, Trello, and the ‘dryness’ of ideas

This is a unique, fascinating read. Long and winding, but with many worthwhile ideas. The author is a data scientist who works with corporate clients. Here’s my favorite insight:

Increasingly I’ve become convinced that the most valuable part of an agile workflow is the part that happens in between sprints. Figuring out what to do next is actually the hardest, and most important part. I’ve adopted the general rule that Sprint Review can and should happen immediately after the end of a Sprint, and Sprint Planning should be the meeting that immediately precedes the sprint. But those two meetings should never be on the same day.

In our quest for productivity, we often move right on to the “next” unit of work without giving ourselves the space to pause and examine our course of action. In the exploratory journey of a data science project, it’s simply not possible to plot the entire course up front.

towardsdatascience.com

Amazon Redshift and the art of performance optimization in the cloud

Very interesting blog post by the CTO of Amazon, Werner Vogels. In it, he discusses how the Redshift team uses fleet telemetry to improve performance. Apparently there has been a 3.5x gain in query throughput over the past 6 months due to small incremental improvements derived from this approach. Pretty great.

I still feel like Redshift has the wrong architecture for the cloud, and that Snowflake and BigQuery will continue to gain ground over time. But this post shows that there is quite a lot of forward momentum for Redshift. If you’re a current user, it's well worth reading.

www.allthingsdistributed.com

This is a series of four posts on graph theory. The series progresses from history (which turns out to be very interesting) to graph notation to network theory, and then gets into real-world applications.

Data practitioners are frequently well-versed in many mathematical and statistical areas, but are often under-exposed to graph theory. If that sounds like you, this is a solid, digestible intro. Graphs are everywhere.

towardsdatascience.com

Thanks to our sponsors!

Fishtown Analytics: Analytics Consulting for Startups

At Fishtown Analytics, we work with venture-funded startups to build analytics teams. Whether you’re looking to get analytics off the ground after your Series A or need support scaling, let’s chat.

www.fishtownanalytics.com

Stitch: Simple, Powerful ETL Built for Developers

Developers shouldn’t have to write ETL scripts. Consolidate your data in minutes. No API maintenance, scripting, cron jobs, or JSON wrangling required.

The internet's most useful data science articles. Curated with ❤️ by Tristan Handy.
