PyTorch vs. TensorFlow. Analytics Engineering. Near-Real-Time ELT. Postgres 12. Snowflake MVs. [DSR #202]

❤️ Want to support this project? Forward this email to three friends!

🚀 Forwarded this from a friend? Sign up to the Data Science Roundup here.

This week's best data science articles

The State of Machine Learning Frameworks

Wow. This is a really fantastic overview of the space right now. It essentially comes down to a PyTorch vs. TensorFlow conversation, where:

PyTorch has the research market, and is trying to extend this success to industry. TensorFlow is trying to stem its losses in the research community without sacrificing too much of its production capabilities. It will certainly take a long time before PyTorch can make a meaningful impact in industry - TensorFlow is too entrenched and industry moves slowly.

The article is actually quite accessible—highly recommended reading.

Facebook Has Been Quietly Open Sourcing Some Amazing Deep Learning Capabilities for PyTorch

The PyTorch 1.3 release post had a fairly understated tone. Ok! Another release. But it actually has some fantastic stuff in it:

Arguably, the most impressive capability of PyTorch is how quickly it has been able to incorporate implementations about new research technique. Not surprisingly, the artificial intelligence(AI) research community has started adopting PyTorch as one of the preferred stacks to experiment with new deep learning methods. The new release of PyTorch continues this trend by adding some impressive open source projects surrounding the core stack.

The ML community has been interested in explainability and privacy for years; it’s great seeing first-class support for cutting edge projects in these spaces from PyTorch.

towardsdatascience.com • Share

When did analytics engineering become a thing? And why?

“Analytics engineer” is a job title that has started to take off in the community—we now see dozens of new job posts a week with that title, up from 0 in 2018. It’s become a critical role on the modern data team, and this post by Claire Carroll explains why.

!!

This release provides application developers with new capabilities such as SQL/JSON path expression support, optimizations for how common table expression (WITH) queries are executed, and generated columns.

CTE query execution optimization is a huge win for folks who are using pg as a data warehouse. This is awesome :)

Logs & Offsets: (Near) Real Time ELT with Apache Kafka + Snowflake

Wowowow. Convoy has implemented a near-real-time ELT pipeline across their entire data stack while staying SQL-first in the transformation layer. It’s Snowflake’s streams and tasks that enable the real-timiness in the T layer, while Debezium + Kafka handle the data ingestion (they had to move off of an unnamed managed ingestion service due to increasing latency as dataset sizes grew).

I’ve written a lot over the years about the modern ELT stack and this is the first post where I’ve seen a meaningful improvement to the reference infrastructure we’ve been building for clients since 2016.

Snowflake | Using Materialized Views to Solve Multi Clustering Performance Problems

I rarely link to posts by vendors specifically about their own features. BUT: this is a really good one.

Snowflake recently released Materialized Views. MVs are a somewhat standard feature in the traditional database world—I started working with Oracle at 8i and they were standard even back then. But Snowflake is the first cloud data warehouse to support them, which has a bunch of people asking “what do I do with these things?”

This post is a brilliant example of an ideal use case: creating what Vertica calls projections. Projections are essentially copies of an underlying dataset that have different config properties: they’re clustered differently, have a filter applied, or some other optimization. This helps dramatically when querying extremely large tables (1b rows or more), and MVs provide a super-easy way to keep one or more projections up-to-date with the underlying table.

Features like this are really important if we want the cloud data warehouse world to fully subsume the on-prem world. And, before you ask: no, dbt doesn’t support Snowflake MVs out of the box, but you absolutely could make your own materialization to support them. I plan on playing with this over the coming week and will be sure to post in Slack if I come up with anything useful.

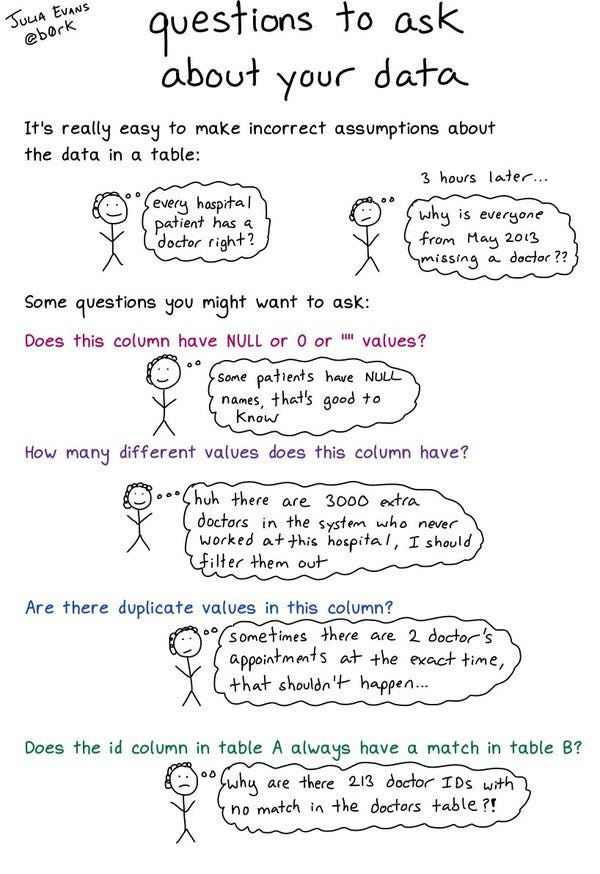

questions to ask about your data https://t.co/KUHtaFwSNh

Thanks to our sponsors!

dbt: Your Entire Analytics Engineering Workflow

Analytics engineering is the data transformation work that happens between loading data into your warehouse and analyzing it. dbt allows anyone comfortable with SQL to own that workflow.

Stitch: Simple, Powerful ETL Built for Developers

Developers shouldn’t have to write ETL scripts. Consolidate your data in minutes. No API maintenance, scripting, cron jobs, or JSON wrangling required.

The internet's most useful data science articles. Curated with ❤️ by Tristan Handy.

If you don't want these updates anymore, please unsubscribe here.

If you were forwarded this newsletter and you like it, you can subscribe here.

Powered by Revue

915 Spring Garden St., Suite 500, Philadelphia, PA 19123